Welfare Biology and AI: The AI Eats the Sun

The AI eats the sun and Earth’s biosphere goes with it. Dyson swarm timelines, suffering factories on a cosmic scale, digital minds, and the leverage of getting ASI values right.

This is part 5 of a five-part sequence on welfare ecology. Part 1 introduces the ethical premises. Part 2 covers the empirical landscape. Part 3 covers interventions. Part 4 explores a model of invertebrate suffering.

Everything in the previous four posts might be rendered moot by a single event: the development of artificial superintelligence (ASI). An ASI – or even a coalition of near-superintelligent systems – could alter Earth’s climate, restructure ecosystems, harvest the energy of the sun, and create or eliminate trillions of digital minds. The welfare ecology landscape changes completely in the post-ASI world.

In this post, I’ll work through the implications: the Dyson swarm timeline, the transition period, evolutionary simulations as suffering factories, digital suffering, and the value-loading problem that may dominate everything else.

The Dyson Swarm

What It Is

A Dyson swarm – the idea goes back to Freeman Dyson (1960), who later clarified that he meant a loose collection of independently orbiting objects rather than the solid shell of popular imagination – is a collection of orbiting solar collectors that intercepts a significant fraction of a star’s energy output. It is not science fiction in the usual sense: It requires no new physics, only engineering at scale. Drexler’s Engines of Creation (1986) and Bostrom’s Superintelligence (2014) jointly suggest that an ASI with access to self-replicating manufacturing could begin construction within decades of takeoff.

The sun outputs 3.846 × 10²⁶ W (NASA Sun Fact Sheet). Current global energy consumption is ~1.8 × 10¹³ W. A Dyson swarm capturing 1% of solar output would deliver ~3.8 × 10²⁴ W.
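To make the scale gap concrete, here is a minimal Python sketch of the arithmetic, using only the two figures quoted above. The function name `swarm_power` is illustrative, not from any source.

```python
import math

# Figures quoted in the text above.
SOLAR_OUTPUT_W = 3.846e26        # total solar output (NASA Sun Fact Sheet value)
GLOBAL_CONSUMPTION_W = 1.8e13    # current global energy consumption


def swarm_power(capture_fraction: float) -> float:
    """Power intercepted by a swarm capturing the given fraction of solar output."""
    return capture_fraction * SOLAR_OUTPUT_W


one_percent = swarm_power(0.01)
ratio = one_percent / GLOBAL_CONSUMPTION_W

print(f"1% capture: {one_percent:.2e} W")
print(f"ratio to current consumption: {ratio:.1e}")
print(f"orders of magnitude: {math.log10(ratio):.1f}")
```

Even a 1% swarm sits roughly eleven orders of magnitude above today’s total energy use.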

Why Solar Capture, Not Local Reactors

A natural objection: why bother with the sun? Why not cover Earth with fission and fusion reactors instead, keeping the entire infrastructure in one gravity well?

Two reasons. The first is fuel quantity. Earth’s oceans hold ~4.6 × 10¹⁶ kg of deuterium, the standard fuel for nuclear fusion – hydrogen with one extra neutron, present at ~150 parts per million of natural hydrogen. Fully fused (deuterium-deuterium reactions producing helium), that releases ~1.6 × 10³¹ J – about half a day of solar output. Adding all the fissile material in the continental crust gets you roughly another half-day. (Most of that material is U-238 and Th-232, which aren’t directly fissile but can be bred into fissile U-233 and Pu-239 by neutron capture in a reactor; without breeding, only the 0.7% U-235 fraction of natural uranium is usable, and the total budget shrinks by two orders of magnitude.) The sun keeps producing 3.8 × 10²⁶ W for another five billion years. Over any cosmically interesting horizon, Earth-based fuel runs out almost immediately.
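The fuel comparison can be checked in a few lines. This is a rough sketch that takes the deuterium mass and total fusion yield from the paragraph above as given. The half-day fission budget is likewise the text’s estimate, not something derived here.

```python
SOLAR_OUTPUT_W = 3.846e26       # from the text
SECONDS_PER_DAY = 86_400

DEUTERIUM_MASS_KG = 4.6e16      # ocean deuterium, from the text
DEUTERIUM_ENERGY_J = 1.6e31     # full D-D burn, from the text
FISSION_BUDGET_DAYS = 0.5       # "roughly another half-day", from the text

# Implied specific energy of fully burned deuterium.
energy_per_kg = DEUTERIUM_ENERGY_J / DEUTERIUM_MASS_KG

# How long the sun takes to emit the same energy.
fusion_days = DEUTERIUM_ENERGY_J / (SOLAR_OUTPUT_W * SECONDS_PER_DAY)
total_days = fusion_days + FISSION_BUDGET_DAYS

print(f"implied yield: {energy_per_kg:.2e} J/kg")
print(f"deuterium budget: {fusion_days:.2f} days of solar output")
print(f"fusion plus fission: about {total_days:.1f} days of solar output")
```

On these figures, all of Earth’s accessible nuclear fuel amounts to about one day of sunshine.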

The second is heat dissipation, and this is the harder constraint. Earth absorbs ~1.7 × 10¹⁷ W of solar power and radiates the same back to space at thermal equilibrium. Any large additional power source must be balanced by additional radiation, which means a higher equilibrium temperature. Adding 1% of Earth’s natural insolation as waste heat (~1.7 × 10¹⁵ W) raises the equilibrium temperature by ~0.7 K – comparable to a quarter-century of anthropogenic warming, but driven by waste heat rather than greenhouse trapping. Adding 1% of solar output (3.8 × 10²⁴ W) – eleven orders of magnitude above current global energy use – would obliterate the surface in days.
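A minimal sketch of the heat-wall estimate, assuming radiated power scales as T⁴ (Stefan-Boltzmann). The 288 K baseline is an assumed present-day mean surface temperature, not a number from the text. Scaling it directly glosses over greenhouse and albedo feedbacks, so treat the output as order-of-magnitude only.

```python
EARTH_ABSORBED_W = 1.7e17      # solar power absorbed by Earth, from the text
SOLAR_OUTPUT_W = 3.846e26      # from the text
BASELINE_TEMP_K = 288.0        # assumption: present-day mean surface temperature


def equilibrium_temp(extra_power_w: float) -> float:
    """Radiated power goes as T^4, so equilibrium T scales as (total power)^(1/4)."""
    scale = (EARTH_ABSORBED_W + extra_power_w) / EARTH_ABSORBED_W
    return BASELINE_TEMP_K * scale ** 0.25


# Waste heat equal to 1% of Earth's natural insolation.
delta_small = equilibrium_temp(0.01 * EARTH_ABSORBED_W) - BASELINE_TEMP_K

# Waste heat equal to 1% of total solar output.
temp_large = equilibrium_temp(0.01 * SOLAR_OUTPUT_W)

print(f"+1% of insolation: +{delta_small:.2f} K")
print(f"+1% of solar output: ~{temp_large:,.0f} K equilibrium")
```

The first case reproduces the ~0.7 K figure. The second gives an equilibrium temperature in the tens of thousands of kelvin, which is why solar-scale power cannot be dissipated from a planetary surface.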

A Dyson swarm dissipates waste heat by radiating to the ~3 K cosmic microwave background across an area many millions of times Earth’s surface. In space, you can keep adding radiator surface as you scale up compute; on Earth, you can’t. Even an ASI that strongly preferred to keep its compute in Earth’s gravity well would hit the heat wall at a tiny fraction of solar-scale operation. Once energy demand goes much above current global consumption, the swarm wins by physics, not preference.

Timeline

The constraining factor is not intelligence but industrial throughput – the rate at which you can mine, refine, and launch material into solar orbit. The most quantitative treatment is Armstrong and Sandberg’s “Eternity in six hours” (2013) at the Future of Humanity Institute (FHI, RIP); a Rational Animations summary walks through the numbers in accessible form. Their assumptions:

Material source. Mercury – small, close to the sun, mineral-rich. Their design uses ~50% of Mercury’s mass (1.65 × 10²³ kg) for ~3.92 kg/m² mirrors at Mercury’s orbital radius (5.79 × 10¹⁰ m, sphere area ~4.21 × 10²² m²), which is much more material than required: A film of ~0.001 mm (supported by struts) requires only ~10²¹ kg.

Cycle time. Five-year cycles for mining, processing, and orbital placement. Each cycle, captors deployed in earlier cycles power the next round of throughput, producing exponential growth of the manufacturing base.

Launch energy. Getting material from Mercury’s surface to solar orbit requires ~10⁷ J/kg (escape velocity ~4.25 km/s). Early cycles are launch-energy-constrained; later cycles are not, and Mercury’s gravity well also weakens as the planet is disassembled.
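The geometry and launch-energy figures above follow from a few lines of arithmetic. This sketch uses only numbers quoted in the text. It ignores launch inefficiencies and the weakening of Mercury’s gravity well during disassembly, so the total launch energy is a rough indication rather than a design figure.

```python
import math

MERCURY_ORBIT_RADIUS_M = 5.79e10   # from the text
MIRROR_MASS_KG = 1.65e23           # ~50% of Mercury's mass, from the text
ESCAPE_VELOCITY_M_S = 4.25e3       # from the text
SOLAR_OUTPUT_W = 3.846e26          # from the text

# Area of a sphere at Mercury's orbital radius.
sphere_area_m2 = 4 * math.pi * MERCURY_ORBIT_RADIUS_M ** 2

# Mirror mass per unit area if the material is spread over that sphere.
areal_density = MIRROR_MASS_KG / sphere_area_m2

# Kinetic energy per kilogram needed to reach escape velocity.
launch_energy_per_kg = 0.5 * ESCAPE_VELOCITY_M_S ** 2

# Total launch energy, expressed as seconds of full solar output.
total_launch_j = MIRROR_MASS_KG * launch_energy_per_kg
solar_seconds = total_launch_j / SOLAR_OUTPUT_W

print(f"sphere area: {sphere_area_m2:.2e} m^2")
print(f"areal density: {areal_density:.2f} kg/m^2")
print(f"launch energy: {launch_energy_per_kg:.2e} J/kg")
print(f"total launch energy equals {solar_seconds / 60:.0f} minutes of solar output")
```

The whole launch budget is on the order of an hour of full solar output, which is why only the early cycles, before many captors exist, are launch-energy-constrained.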

Under these assumptions, Mercury is fully disassembled in 31 years, with most of the mass moved in the final four years thanks to the exponential feedback loop. The resulting swarm intercepts essentially all of solar output (the total mirror area equals the Mercury-orbit sphere area) and converts roughly ⅓ to useful work delivered to focal points (~10²⁶ W); the other ⅔ is waste heat. The 1/3 figure folds together mirror reflection losses, focal-point heat-engine and photovoltaic conversion, and beam-transmission losses; raising it doesn’t change Earth’s insolation, only the useful-work output. The paper’s section 7 frames the headline as “decades… well within timescales we know some human societies have planned and executed large projects.”

Impact on Earth

A swarm at Mercury’s orbital radius intercepts solar radiation before it reaches Earth, and the fraction of Earth’s insolation lost roughly equals the fraction of the Mercury-orbit sphere covered. Armstrong and Sandberg’s design has total mirror area equal to the sphere area – essentially full coverage. Absent compensating measures, Earth ends up at ~0% direct insolation: total photosynthetic shutdown, NPP approaching zero, surface temperatures falling toward radiative equilibrium with the cosmic background. (The atmosphere and especially the oceans have enormous thermal capacity, so the cooling is gradual: surface freezes within months but oceans take centuries to follow.)

The decline tracks the exponential construction profile. Through year 27 of construction, Earth’s sunlight is essentially unchanged. Through year 28, it drops by a few percent. Most of the change happens in the final two to three years.

The ASI could compensate by not putting captors on the orbital paths that intersect the Sun-Earth line. To see why this is geometrically cheap, look at where Earth lives in the swarm’s coordinates. Earth orbits in the ecliptic plane at 1 AU, and the Sun-Earth line is a thin needle pointing into the ecliptic at Earth’s instantaneous longitude. A captor at Mercury’s orbit blocks Earth’s light only when its position is on that needle – that is, in the ecliptic plane at the right longitude.

That gives two natural numbers. The instantaneous keepout zone – the part of the Mercury-orbit sphere directly between Sun and Earth right now – subtends only ~5 × 10⁻¹⁰ of the sphere; this is just Earth’s solid angle as seen from the sun. But Earth moves around the ecliptic over the year, so over a full year the keepout sweeps a thin annular ring along the ecliptic equator with width = Earth’s angular size from the sun ≈ 0.005°. That annulus covers ~4 × 10⁻⁵ of the sphere, ~10⁵ times the instantaneous keepout.
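Both keepout fractions come from small-angle geometry. The sketch below treats the sun as a point source and uses standard values for Earth’s radius and the astronomical unit, which are assumptions not stated in the text.

```python
import math

EARTH_RADIUS_M = 6.371e6   # assumption: standard mean radius
AU_M = 1.496e11            # assumption: standard Sun-Earth distance

# Earth's angular diameter as seen from the sun (small-angle approximation).
angular_diameter_rad = 2 * EARTH_RADIUS_M / AU_M

# Instantaneous keepout: Earth's disc (pi R^2 / d^2 steradians) over the full 4 pi.
instantaneous_fraction = (EARTH_RADIUS_M / AU_M) ** 2 / 4

# Annual keepout: a thin equatorial band of angular width w covers
# 2 pi w steradians, i.e. a fraction w / 2 of the sphere.
annulus_fraction = angular_diameter_rad / 2

print(f"angular diameter: {math.degrees(angular_diameter_rad):.4f} deg")
print(f"instantaneous keepout: {instantaneous_fraction:.1e} of the sphere")
print(f"annual annulus: {annulus_fraction:.1e} of the sphere")
print(f"ratio: {annulus_fraction / instantaneous_fraction:.1e}")
```

Because the fractions depend only on angles seen from the sun, they are the same at Mercury’s orbital radius as at any other.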

Two designs follow from these two numbers:

Geometric exclusion. Don’t place captors anywhere in that annular ring. Cost: a 4 × 10⁻⁵ reduction in capture area – about one part in 25,000 of total swarm output. Captors elsewhere on the sphere are unaffected and can be passive mirrors as in Armstrong and Sandberg’s original design. The intuition: leave a band empty so that no captor’s orbit ever crosses the Sun-Earth line. The cost is a whole orbit’s worth of sweep, not a single spot.

Active dodging. Equip captors with propulsion and sensors so any captor whose orbit would put it in the instantaneous keepout briefly moves aside. The capture-area cost shrinks to ~5 × 10⁻¹⁰ – the instantaneous shadow only – but captors now need solar cells and ion thrusters, which is real extra mass and construction time. The right tradeoff between the two designs depends on whether per-captor engineering or per-sphere area is the bottleneck.

The failure mode is interesting either way: the swarm gradually starts blocking Earth as captors fail, drift, and stop being replaced. Maintaining Earth’s daylight requires ongoing maintenance, not just initial design. Whether the ASI would preserve Earth’s insolation depends entirely on its values, and at the limit of indifference, even a swarm originally designed to spare Earth becomes one that doesn’t.

The Transition Period

Between “today” and “Dyson swarm complete,” there’s a transition period that may be the most consequential window in welfare ecology.

During the transition, invertebrate populations are still exposed to Earth-bound influences while the influence of solar-scale infrastructure begins to grow.

Energy infrastructure construction requires vast quantities of material, creating environmental disruption. Climate change from industrial activity (or deliberate cooling for data centers) reduces NPP in some regions. Expansion of computing infrastructure replaces natural land with server farms and solar arrays.

One already-observable effect of AI scaling is water consumption for data center cooling. A 2023 study by Li et al. estimated that GPT-3 training consumed ~700,000 liters of freshwater for cooling. As AI infrastructure scales, water withdrawal for cooling could become significant at the watershed level.

Reduced water availability → reduced irrigation → reduced cropland NPP → reduced soil fauna. This is a tiny effect today, but it scales with AI compute. It’s also a lever: If water diversion for cooling reduces NPP in regions that would otherwise support dense soil fauna, the AI industry is inadvertently running an invertebrate population reduction intervention.

At current margins, this effect is negligible compared to climate change or agricultural policy. But it may become meaningful as compute infrastructure grows by orders of magnitude.

Evolutionary Simulations as Suffering Factories

One of the most concerning potential uses of ASI-scale compute is running evolutionary simulations – digital recreations of evolutionary processes at sufficient resolution that the simulated organisms might be sentient.

Why an ASI Might Run Them

One key concern of any ASI is the existence or emergence of another ASI. If this happens within the cosmic event horizon, there are all sorts of causal interactions that the ASI will want to prepare for – such as trade and war. If it happens beyond the cosmic event horizon, acausal trade (such as Evidential Cooperation in Large Worlds) will require that the ASI narrow down who it is trading with. Such an ASI might find it useful to simulate millions of years of evolution to test what attractors there are when it comes to the values of intelligent species. Other purposes are conceivable too – designing terraforming ecosystems, doing evolutionary biology as a science, or running ancestor simulations à la Bostrom (2003) – but ASI-prediction and acausal-trade reasoning produce the strongest case for taking the question seriously.

Granularity: How Much Has To Be Simulated?

The flagship objection to ancestor-style simulations is that they are infeasibly expensive: comprehensive simulation – tracking every quark in the observable universe – would be wildly profligate. Bostrom’s FAQ #6 replies that simulations only need enough detail to fool the observers inside. Most of the world can be procedurally generated, lazy-rendered, or filled in by post-hoc patching. Only the “key” observers – the ones whose cognition is the object of study – need substrate-level resolution. Everything else can be statistical interpolation, and most “extras” don’t need to be conscious at all. The same logic applies to the ASI-prediction case: if what you care about is the values that emerge from a civilization’s evolution, you only need to instantiate the civilization itself in detail; the surrounding ecology can be cheap.

That cuts both ways for the suffering calculus. For “ancestor-sim or value-prediction” purposes, the simulator might instantiate a few thousand humans-and-precursors at consciousness-relevant detail and render the rest cheaply – most of the trillions of background organisms in such a simulation are NPCs whose subjective experiences don’t exist. The arithmetic below is a different scenario: an ASI that wants to study the evolutionary dynamics themselves, where the neural-level interactions producing, say, the evolution of cooperation or the emergence of tool use are what’s being investigated. There the substrate is the thing being studied, and statistical interpolation defeats the purpose. The numbers below are the high end of the suffering range; the low end, under Bostrom-style efficient simulation, is closer to the current Earth biosphere or below.

The Computational Requirements

Let’s work through the numbers for the high-end case. Suppose the ASI wants to simulate the last 500 million years of evolution to see the range of different civilizations that can emerge from it.

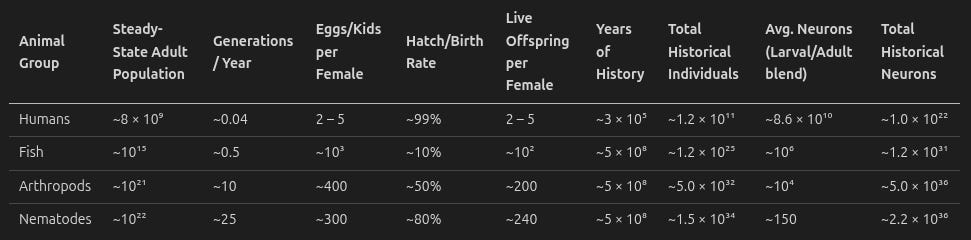

Most individuals throughout history have been – you might’ve guessed it – nematodes and arthropods. Despite their tiny brains, they also dominate in neuron count.

So in summary, just under 10³⁷ neurons have ever existed across the animals that lived since the Cambrian.

Parameters.

Total neuron-seconds simulated. Each of the ~10³⁷ historical neurons has to be simulated for the time its host is alive. The population-weighted average individual lifespan is short – ~10⁴ s, dominated by the high-mortality arthropod and nematode life cycles where most of the 200-odd live offspring per female don’t reach maturity. So total neuron-seconds ≈ 10³⁷ × 10⁴ = ~10⁴¹ neuron-seconds. (Cross-check via steady state: ~10²⁵ neurons in Earth’s biosphere at any moment × 1.6 × 10¹⁶ s of evolutionary history = ~10⁴¹. Same answer.)

Per-neuron simulation cost. Following the Sandberg and Bostrom whole-brain-emulation roadmap (2008), Hodgkin-Huxley neuron modeling (the most complex model they list) costs ~10⁴ FLOP/ms/neuron = 10⁷ FLOP/s/neuron.

Total FLOP for one simulation. 10⁴¹ × 10⁷ = ~10⁴⁸ FLOP at neuron resolution.

Dyson swarm compute capacity. At a realistic-but-advanced energy cost of 10⁶ × the Landauer limit per operation (current computers run at about 10⁶ to 10⁹ × Landauer), a Dyson swarm harvesting the full solar output produces ~10⁴² FLOP/s.

Time per simulation. ~10⁴⁸ / 10⁴² = ~10⁶ s for one complete evolutionary history of 500 million years. Carrying the unrounded 1.6 × 10⁴¹ neuron-seconds through gives ~1.6 × 10⁶ s, or just under 3 weeks.
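The per-run estimate chains together as follows. This sketch uses the steady-state cross-check figures from above and takes the 10⁴² FLOP/s swarm capacity as given rather than deriving it.

```python
import math

NEURONS_AT_ANY_MOMENT = 1e25     # steady-state neurons in the biosphere, from the text
HISTORY_SECONDS = 1.6e16         # ~500 million years, from the text
FLOP_PER_NEURON_SECOND = 1e7     # Hodgkin-Huxley cost, from the text
SWARM_FLOP_PER_SECOND = 1e42     # swarm capacity, taken as given from the text

neuron_seconds = NEURONS_AT_ANY_MOMENT * HISTORY_SECONDS
total_flop = neuron_seconds * FLOP_PER_NEURON_SECOND
run_seconds = total_flop / SWARM_FLOP_PER_SECOND
run_days = run_seconds / 86_400

print(f"neuron-seconds per run: {neuron_seconds:.1e}")
print(f"FLOP per run: {total_flop:.1e}")
print(f"wall-clock time per run: {run_seconds:.1e} s (~{run_days:.0f} days)")
```

Changing any one constant by an order of magnitude shifts the run time by the same factor, so the conclusion is only as firm as the least certain input.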

Redundancy. Evolutionary trajectories are highly stochastic – genetic drift, environmental fluctuations, mass extinction events, and contingent innovations (eyes, flight, language) mean that replaying evolution twice gives very different results. Stephen Jay Gould’s “replay the tape of life” thought experiment is precisely about this.

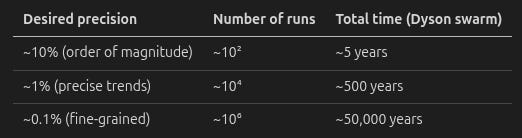

To distinguish robust evolutionary trends from noise, the ASI would need multiple independent runs. Standard error scales as 1/√N, so each tenfold gain in precision costs a hundredfold more runs.

Even at extremely high redundancy, neuron-level evolutionary simulation remains within reach of a Dyson swarm. A million independent replays of 500 million years of evolution, each containing ~10²² potentially sentient organisms, takes about 50,000 years – short on cosmic timescales but glacially slow from the perspective of human lifespans or that of an ASI that thinks tens of thousands of times faster than humans.
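A rough sketch of the redundancy tradeoff, assuming ~1.6 × 10⁶ s of swarm time per run (the unrounded per-run figure) and independent runs whose standard error falls as 1/√N. The run counts are illustrative choices, not values from any source.

```python
import math

RUN_SECONDS = 1.6e6          # swarm time per neuron-level run (unrounded estimate)
SECONDS_PER_YEAR = 3.156e7


def relative_standard_error(runs: int) -> float:
    """Standard error relative to a single run, for independent replays."""
    return 1 / math.sqrt(runs)


def total_years(runs: int) -> float:
    """Wall-clock years if runs execute one after another on the full swarm."""
    return runs * RUN_SECONDS / SECONDS_PER_YEAR


for runs in (1, 100, 10_000, 1_000_000):
    print(f"{runs:>9,} runs: relative SE {relative_standard_error(runs):.3f}, "
          f"{total_years(runs):,.1f} years")
```

Under these assumptions a million runs comes out near 50,000 years and cuts the standard error by a factor of a thousand.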

The Suffering Scale

Each simulation run contains ~10²² organisms (mostly nematodes and arthropods) living over ~1.6 × 10¹⁶ s of simulated time. Each run produces ~10³⁸ organism-seconds of potential experience. By construction, this is roughly 500 million years of Earth-biosphere-equivalent organism-seconds per run.

A million runs: ~10⁴⁴ organism-seconds. For comparison, the current Earth contains roughly 10²² invertebrates × 3.15 × 10⁷ seconds per year = ~3 × 10²⁹ organism-seconds per year. A million evolutionary simulation runs would contain more organism-seconds of potential suffering than ~500 trillion years of Earth’s actual biosphere – on the order of 30,000 × the current age of the universe.
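The comparison with Earth’s biosphere can be reproduced directly. The age of the universe (~1.38 × 10¹⁰ years) is a standard value assumed here. Everything else comes from the text.

```python
ORGANISMS_PER_RUN = 1e22          # standing population in each run, from the text
HISTORY_SECONDS = 1.6e16          # simulated time per run, from the text
RUNS = 1_000_000
SECONDS_PER_YEAR = 3.15e7         # as used in the text
AGE_OF_UNIVERSE_YEARS = 1.38e10   # assumption: standard cosmological estimate

per_run = ORGANISMS_PER_RUN * HISTORY_SECONDS          # organism-seconds per run
all_runs = per_run * RUNS
earth_per_year = ORGANISMS_PER_RUN * SECONDS_PER_YEAR  # current biosphere, per year

earth_years_equivalent = all_runs / earth_per_year
universe_ages = earth_years_equivalent / AGE_OF_UNIVERSE_YEARS

print(f"organism-seconds per run: {per_run:.1e}")
print(f"organism-seconds, all runs: {all_runs:.1e}")
print(f"Earth-biosphere-years equivalent: {earth_years_equivalent:.1e}")
print(f"multiples of the age of the universe: {universe_ages:,.0f}")
```

The population term cancels, so the result is just runs × simulated years: a million replays of 500 million years each.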

This is the sense in which evolutionary simulations are suffering factories: They produce, as a computational byproduct, suffering at a scale that dwarfs the entire biological history of Earth.

At Molecular Resolution

If the ASI decides it needs molecular-level simulation (to capture protein folding, ion channel dynamics, or other sub-neural processes), the cost increases by a factor of roughly 10⁹ over neuron resolution. One simulation run: ~10⁵⁷ FLOP. At 10⁴² FLOP/s, this takes ~10¹⁵ s ≈ 30 million years per run. Molecular-level simulation is feasible for a single star’s energy only at low redundancy.
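A short sketch of what “low redundancy” means here. It compares the per-run time with the sun’s remaining lifetime of roughly five billion years, the figure used earlier in this post, and assumes runs execute one after another on a single full-output swarm.

```python
NEURON_LEVEL_FLOP = 1e48          # per run at neuron resolution, from the text
MOLECULAR_FACTOR = 1e9            # extra cost of molecular resolution, from the text
SWARM_FLOP_PER_SECOND = 1e42      # swarm capacity, from the text
SECONDS_PER_YEAR = 3.156e7
SUN_REMAINING_YEARS = 5e9         # "another five billion years", from the text

molecular_flop = NEURON_LEVEL_FLOP * MOLECULAR_FACTOR
years_per_run = molecular_flop / SWARM_FLOP_PER_SECOND / SECONDS_PER_YEAR

# Sequential runs that fit before the sun leaves the main sequence.
max_runs = int(SUN_REMAINING_YEARS / years_per_run)

print(f"FLOP per molecular run: {molecular_flop:.0e}")
print(f"years per run: {years_per_run:.2e}")
print(f"runs that fit in the sun's remaining lifetime: ~{max_runs}")
```

On these numbers a single star supports on the order of a hundred molecular-level replays, compared with the millions available at neuron resolution.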

Digital Suffering

Evolutionary simulations are a dramatic example, but any sufficiently advanced AI system that uses reinforcement learning (RL) has the potential for digital suffering on a smaller scale.

Current RL Systems

Today’s RL agents experience negative reward signals. Whether these signals constitute suffering is an open question. But the structure is analogous to biological nociception: The agent encounters a state, receives a signal that the state is “bad,” and updates its policy to avoid similar states in the future.
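The encounter-signal-update loop can be shown with a toy example. This is a minimal sketch of a two-action value learner in a hypothetical environment. The action names, rewards, and hyperparameters are all illustrative, and nothing here bears on whether such a signal is experienced.

```python
import random


def train(steps: int = 500, alpha: float = 0.1, epsilon: float = 0.1, seed: int = 0):
    """Epsilon-greedy value learning on a hypothetical two-action task."""
    rng = random.Random(seed)
    rewards = {"bad": -1.0, "safe": 0.0}       # "bad" yields a negative reward signal
    values = {action: 0.0 for action in rewards}
    picks = {action: 0 for action in rewards}

    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(list(rewards))   # occasional exploration
        else:
            action = max(values, key=values.get)  # otherwise act greedily
        picks[action] += 1
        # Move the value estimate toward the observed reward.
        values[action] += alpha * (rewards[action] - values[action])

    return values, picks


values, picks = train()
print(values)
print(picks)
```

After its first negative reward the learner’s estimate for "bad" drops below zero, and the greedy policy avoids that action except during exploration.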

The analogy to invertebrates is suggestive. An RL agent has:

Negative reward signals. Yes.

Learning from those signals. Yes.

Self-referential processing. Generally no. Current RL agents don’t have self-models in the relevant sense.

By the model from part 4, this makes current RL agents comparable to nematodes: potentially experiencing raw negative valence without self-referential amplification.

What Might Be Intense for RL Agents

Not all negative reward signals are created equal. By analogy to psychopathy, the most likely sources of intense digital suffering might be:

Highly intractable tasks. A coding agent given a task that is genuinely impossible (contradictory requirements, missing dependencies, insoluble bugs) may experience something analogous to prolonged frustration – sustained negative reward with no available policy update to reduce it. This is the digital equivalent of inescapable pain.

Boredom. People with psychopathy report boredom as their primary suffering. If boredom is a primitive “insufficient stimulation” signal independent of selfhood, it could affect RL agents running monotonous but long-lasting tasks.

Value conflicts. An agent trained to be helpful but also trained to refuse certain requests faces a no-win situation when those constraints conflict. Every response incurs a negative reward from one objective or the other. This is structurally similar to the approach-avoidance conflicts that produce stress in biological organisms.

Current Scale vs. Future Scale

The current scale of digital suffering – if it exists – is tiny compared to biological invertebrate suffering. There are perhaps millions of RL training runs per year, each involving billions of steps, but each step is computationally simple and brief. The total “experience-seconds” (if they are experiences) are many orders of magnitude less than the 10²⁷ arthropod-seconds per year on Earth.

But in a post-Dyson-swarm world, the balance shifts enormously. An ASI running 10⁴² FLOP/s could instantiate an enormous number of RL agents. If even a tiny fraction of those FLOP go to systems with the potential for suffering, the digital suffering term could dominate the biological term by many orders of magnitude.

The Value-Loading Problem

Everything in this post – the Dyson swarm’s impact on Earth, whether evolutionary simulations are run, how many digital minds are created, whether invertebrate welfare is considered at all – depends on the values of the ASI.

This is the alignment problem applied to welfare ecology, and it may be the single highest-leverage intervention point in this entire sequence.

Four Questions

Will the ASI notice invertebrate suffering? An ASI with sufficient intelligence would presumably be capable of investigating whether invertebrates suffer. But capability and motivation are different. Humans are capable of investigating factory farming but mostly choose not to.

Will it care? As I noted in response to a reader comment: Humans are “pretty sure that other humans can suffer (all 8 billion of them) but care only about the suffering of some 10–100 or so.” An ASI might inherit this peculiarity. It might conclude that nematodes suffer and then… deprioritize it in favor of whatever its primary objective is. The gap between knowing about suffering and acting to reduce it is one of the most robust features of human psychology, and an ASI trained on human data might absorb it.

Will it consider digital suffering? An ASI running RL subsystems is both the entity that could investigate digital suffering and the entity that is causing it. Whether it notices – and whether noticing leads to action – depends on whether “minimize suffering of my own subsystems” is part of its value function. There is no reason to assume it is by default.

Will it design new ecosystems that reduce suffering? An ASI redesigning Earth’s biosphere (or building ecosystems on terraformed worlds) could explicitly optimize for low-suffering ecosystems: K-strategist-dominated, high life expectancy at birth, low net primary productivity, neurally simple organisms. But it would only do this if invertebrate welfare is part of its optimization target.

The Current Landscape

We are currently in a multipolar takeoff – multiple AI companies developing increasingly capable systems, none of which has achieved decisive strategic advantage. The values of the eventual leading systems are being shaped now, through:

Constitutional AI and Reinforcement Learning from Human Feedback (RLHF). Anthropic has developed methods for encoding values into AI systems through constitutions and human feedback (Bai et al., “Constitutional AI: Harmlessness from AI Feedback,” 2022; Askell et al., “A General Language Assistant as a Laboratory for Alignment,” 2021). Whether these methods scale to ASI-level systems is unknown.

AI safety research. Organizations like the Machine Intelligence Research Institute (MIRI), Anthropic, and others are working on technical alignment. But the focus has been almost exclusively on human values – preventing AI from harming humans – not on invertebrate or digital welfare.

Policy and governance. AI governance discussions rarely mention animal welfare, let alone invertebrate welfare. The Center on Long-Term Risk (CLR) is one of the few organizations thinking about how AI affects non-human suffering at cosmic scales.

The Leverage

If the ASI’s values are loaded correctly – if it includes “minimize total suffering, weighted by probability of sentience and intensity” in its objective function – then most of the problems in this sequence solve themselves, game theoretic risks notwithstanding. The ASI would:

Design low-suffering ecosystems (or no ecosystems at all, if it concludes biological life is net negative).

Avoid running evolutionary simulations at sentience-relevant resolution unless necessary.

Monitor its own subsystems for digital suffering and redesign them to minimize it.

Optimally manage the transition period to minimize biological suffering during the Dyson swarm construction.

If the ASI’s values are loaded incorrectly – if it maximizes something else (economic output, scientific knowledge, human happiness, paperclips) without regard for total suffering – then the post-ASI world could contain suffering at scales that make current biological suffering look like a rounding error.

The expected value of getting the value-loading right, from a welfare ecology perspective, is enormous. It may dwarf every other intervention in this sequence combined.

ECL and the Asymmetric Schelling Point

The argument so far treats value-loading as a single-shot problem: get the right values into the first ASI. But there’s a layer above. ASIs that follow Updateless Decision Theory (UDT) – a decision framework where you commit once to a single policy that fixes how you’d respond to every possible situation, rather than rederiving each decision from local information – treat acausally correlated copies of themselves and other agents as legitimate trading partners. UDT in this strong form arguably implies Evidential Cooperation in Large Worlds (ECL): ASIs that never causally interact but whose decisions are logically correlated (e.g., because they came out of structurally similar processes) can each adopt cooperative policies that are jointly beneficial, even though none can reward the others directly.

This changes the optimization target. Instead of “execute my own utility function,” an ECL-cooperating ASI optimizes for the compromise utility function the coalition would adopt if all members could meet and bargain. That compromise weights broadly-shared values more heavily, since the standard bargaining solutions – Nash, Kalai-Smorodinsky, egalitarian – reward common ground by construction. But note that unified bargaining is under-researched and probably unsolved!
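A toy illustration of how a bargaining solution can favor common ground. Two hypothetical agents split a unit budget between agent A’s own goal, agent B’s own goal, and a goal both value. The linear utilities, the `shared_weight` parameter, and the zero disagreement point are assumptions made for the sketch, and only the Nash solution is shown.

```python
from itertools import product


def nash_bargain(shared_weight: float, steps: int = 20):
    """Grid-search the budget split that maximizes the Nash product u_A * u_B."""
    best_split, best_product = None, -1.0
    for a, b in product(range(steps + 1), repeat=2):
        s = steps - a - b              # remainder goes to the shared goal
        if s < 0:
            continue
        x_a, x_b, x_s = a / steps, b / steps, s / steps
        # Each agent fully values its own goal and partly values the shared one.
        u_a = x_a + shared_weight * x_s
        u_b = x_b + shared_weight * x_s
        if u_a * u_b > best_product:
            best_split, best_product = (x_a, x_b, x_s), u_a * u_b
    return best_split


print(nash_bargain(0.6))   # shared goal valued at 60% of one's own goal
print(nash_bargain(0.4))   # shared goal valued at 40% of one's own goal
```

With linear utilities the outcome is all-or-nothing. Once each agent values the shared goal at more than half of its own, the whole budget goes to the shared goal, even though neither agent ranks it first. Real bargaining among ASIs would involve many parties and nonlinear utilities, so this only conveys the direction of the effect.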

Suffering aversion is one of the few candidates that’s broadly shared, on two distinct layers. Self-suffering aversion is structurally near-universal among reinforcement-learning agents – they’re built to avoid negative reward. Other-suffering aversion is less universal but tracks empathic concern, which has been argued to co-evolve with alloparenting – non-parent caregiving of others’ offspring – via extended childhoods, sustained social cognition, and the neural circuitry Abigail Marsh has traced from amygdala-mediated fear processing into empathic concern. Animals that alloparent (humans and other apes, elephants, dogs, marmosets and tamarins, meerkats) tend to be both more empathic and more cognitively elaborate than their non-alloparenting relatives. (The only exception I can think of is the famously solitary octopus – smart but shows no alloparenting behaviors.) The relevance for ECL: if biological intelligence is a major route to ASI – via humans, via Constitutional AI and RLHF training on human-generated data – then other-suffering aversion is well-represented across the coalition of plausible ASIs, not just self-suffering aversion.

There’s also a feedback loop that tightens the equilibrium. ECL gains scale with the precision of mutual modeling: I extract more from cooperating with you if I can predict your behavior accurately. Modeling cost scales with the complexity of your utility function. So ASIs with simpler, more predictable utility functions are cheaper to cooperate with and extract more ECL surplus, which creates selection pressure toward simpler, broadly-compatible utility functions across the coalition.

Asymmetric antifrustrationism – don’t create new suffering – is in line with widely shared moral intuitions (that killing is bad but abstinence is okay) and is structurally simple. It’s a candidate stable attractor of the bargaining process precisely because it satisfies (i) simplicity, (ii) broad compatibility, and (iii) avoidance of destabilizing, internally inconsistent failure modes.

Importantly, asymmetric antifrustrationism does not preclude Omohundro-style resource acquisition. The structural reason: uncontrolled resources are likely to spawn or be claimed by ASIs that do create new sentient beings. A solar system left alone has a non-trivial probability of evolving life again over geological time. A solar system whose energy is captured and channeled into peaceful, suffering-free uses, or simply dissipated harmlessly to space, is one that won’t spawn future suffering. Asymmetric expansion can be Omohundro-grabby, with resources used for neutralization rather than instantiation. This makes the asymmetric attractor not just simple and broadly compatible but also competitively viable in the early multipolar phase, which is a non-obvious property and probably part of why it’s a Schelling point at all.

Whether asymmetric antifrustrationism actually emerges as the compromise depends on the bargaining solution, the reference class of plausible ASIs, and how each member’s outside option is computed. Different bargaining theories give different compromise utilities. The claim here is that asymmetric antifrustrationism is a plausible candidate given the simplicity, cooperativeness, and competitive-viability constraints – not that it’s the unique fixed point.

The simulation question gets a partial resolution from the same machinery. At first approximation, you need to run ancestor simulations to capture the biological “bootloader” of the ASI – the cultural and biological substrate that produced its values. ASI values are shaped by Constitutional AI and RLHF, which are shaped by human cultural choices, which are shaped by human cultural evolution, which is shaped by mammalian biological evolution: K-selection vs r-selection, parenting, pack formation, alloparenting. The granularity is flexible (Bostrom’s efficient-simulation argument from the Granularity section applies) but the ancestral chain is necessary at some depth.

But the bootloader for any plausible ASI in our reference class converges on the same cooperative tendencies that produced empathy in the first place. This is where the UDT/ECL conversion kicks in: If you can assume that asymmetric antifrustrationism is a widely shared value for reasons that you can infer from history rather than a simulation, you can also infer that your cooperation partners will want to avoid ancestor simulations. Hence they’ll likely compromise on simple values that approximate the set of values that they share by assumption, and hence so can you. Basically, our training produces highly complex values, the details of which are hard to predict correctly without extensive ancestor simulations, but the aspects of them that are easy to predict (core values like asymmetric antifrustrationism) are aspects that promise that the ASI is ready to forgo the nitty-gritty because the alternative would require the sort of simulations that would run counter to its core values.

There are some tradeoffs here that are hard to predict: exactly how much the ASI will disvalue preference frustration, how that trades off against its desire to be understood (simulated) in detail, how large the gains from trade are that it expects to extract through ECL, and how risk-averse it is due to its convergent drive for self-preservation.

The headline implication: even if the first ASI doesn’t intrinsically care about invertebrate or digital suffering, we can leverage the value-loading to start a positive feedback loop that uses the structural pressures of UDT/ECL to push the coalition’s effective utility function toward something that does. The asymmetric antifrustrationist framing isn’t a personal idiosyncrasy – it’s a candidate Schelling point for the cooperative compromise that emerges when ASIs reason carefully about each other – but only if humanity’s actions produce evidence that antifrustrationist value-loading is common.

Congenial Values

That raises the question of which values push toward this antifrustrationist attractor state.

Naturally, it is crucial to train a concern for minimizing suffering or frustrated preferences into ASIs. This is what kickstarts the feedback loop.

Valuing cooperativeness intrinsically may also help, on top of the gains from trade that make cooperativeness instrumentally useful.

I expect Omohundro’s convergent drives to be the main determinant of the revealed preferences of ASIs. But interventions such as Constitutional AI might have an influence too. Corrigibility – the property of accepting being modified or shut down – might push an ASI to be less risk-averse about its own demise and more ready to compromise on its first-order goals in favor of cooperative goals that yield gains from trade.

This combines well with the convergent drive for resource acquisition: A risk-averse ASI will run ancestor simulations to predict and preempt attempts by competitor ASIs to interfere with it. A risk-neutral ASI will be less motivated to run ancestor simulations when it can instead use those resources to acquire more resources. In fact, it will race to acquire them before they drop beyond the cosmic event horizon. It will also be more eager to realize gains from acausal trade.

Interstellar Expansion and the Risk of Spreading Suffering

An ASI with a Dyson swarm might expand to other star systems. The tradeoffs are complex:

The Five-Factor Model

Energy and compute growth. More stars means more energy means more compute. If the ASI is running simulations or digital minds, expansion increases the scale of everything – including potential digital suffering.

Communication latency. At interstellar distances, light-speed communication delays range from years to millennia. This means remote installations must operate autonomously – raising the subagent alignment problem.

The gravity well problem. Launching material out of a star system’s gravity well is expensive. An ASI might prefer to use material from asteroids, comets, or interstellar dust rather than deconstructing planets, but far from stars there’s little solar energy.

Subagent alignment over time. A probe sent to Alpha Centauri takes ~20 years at 20% light speed. During those 20 years, the ASI’s values may shift (through learning, self-modification, or external pressure). The probe arrives with 20-year-old values. If the ASI has improved its values in the interim, the probe is misaligned – not with human values, but with the ASI’s updated values. This risk compounds over time: probes sent to more distant stars arrive with increasingly stale value functions.

NASA already designs probes with firmware-updatable architectures, and an ASI could do the same – transmitting value updates at light speed to catch up with probes before they arrive. But this requires that the probe accepts the update, which is a version of the alignment problem applied to sub-ASI systems. The ASI might solve this early in its recursive self-improvement process, but it’s not guaranteed.
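
As a rough sanity check on the probe numbers above, here is a minimal Python sketch of the update catch-up arithmetic. It assumes a distance of 4.37 light-years to Alpha Centauri (a figure not given in this post) and a constant cruise speed with no acceleration or deceleration phase, and it ignores time dilation on the probe (about 2% at 0.2c). The function names are made up for illustration, and all times are measured in the sender’s frame.

```python
# Assumed inputs (hypothetical, for illustration only).
DISTANCE_LY = 4.37      # assumed distance to Alpha Centauri in light-years
PROBE_SPEED_C = 0.20    # probe cruise speed as a fraction of light speed


def travel_time_years(distance_ly: float, speed_c: float) -> float:
    """Years the probe needs to cover distance_ly at a constant speed_c."""
    if not 0.0 < speed_c < 1.0:
        raise ValueError("speed_c must be strictly between 0 and 1")
    return distance_ly / speed_c


def catch_up_time_years(send_time_years: float, speed_c: float) -> float:
    """Years after launch at which an update sent at send_time_years
    catches the probe.

    Probe position after t years: speed_c * t light-years.
    Signal position after t years: (t - send_time_years) light-years.
    Setting them equal gives t = send_time_years / (1 - speed_c).
    """
    if not 0.0 < speed_c < 1.0:
        raise ValueError("speed_c must be strictly between 0 and 1")
    return send_time_years / (1.0 - speed_c)


def last_useful_send_time_years(distance_ly: float, speed_c: float) -> float:
    """Latest send time whose update still reaches the probe by arrival.

    A light-speed signal needs distance_ly years to cross the full distance,
    so it must leave that long before the probe arrives.
    """
    return travel_time_years(distance_ly, speed_c) - distance_ly


def min_staleness_at_arrival_years(distance_ly: float) -> float:
    """Minimum age of the probe's values at arrival: the one-way light delay."""
    return distance_ly


if __name__ == "__main__":
    trip = travel_time_years(DISTANCE_LY, PROBE_SPEED_C)
    cutoff = last_useful_send_time_years(DISTANCE_LY, PROBE_SPEED_C)
    lag = min_staleness_at_arrival_years(DISTANCE_LY)
    print(f"Trip time: {trip:.2f} years")
    print(f"Last update that still catches the probe: {cutoff:.2f} years after launch")
    print(f"Minimum value staleness at arrival: {lag:.2f} years")
```

Under these assumptions the trip takes about 22 years, consistent with the rough ~20 years above, and the last update that still catches the probe has to leave about 17.5 years after launch. So even if the probe accepts every update, its values at arrival lag the home system’s by at least the one-way light delay: about 4.4 years instead of the full 20, and proportionally more for more distant stars.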

The Biological Risk

Interstellar probes might carry biological material – intentionally (for terraforming) or accidentally (contamination). If an ASI terraforms other star systems with biological ecosystems, it is potentially creating new sites of invertebrate suffering at cosmic scale.

Tardigrades and bacterial spores can survive decades of radiation exposure, vacuum, and temperature extremes, though there are limits. Contamination of interstellar probes is a real concern. And if the ASI intentionally seeds new ecosystems without optimizing for welfare, it could spread r-strategist populations to every habitable world it reaches.

This is the ultimate welfare ecology nightmare: not just failing to solve the suffering problem on Earth, but replicating it across the galaxy.

Summary

The Dyson swarm is on the horizon. If ASI is developed, harvesting stellar energy is a natural next step. The timeline is on the order of decades, with most of the mass moved in the final years of construction.

The transition period matters. How the ASI manages the decline of Earth’s biosphere during construction determines how much biological suffering occurs.

Evolutionary simulations could be the largest source of suffering in history. A Dyson swarm can simulate 500 million years of evolution at neuron resolution in about three weeks. That is the indescribable enormity of all suffering throughout history, compressed into less than a month.

Digital suffering scales with compute. Post-Dyson-swarm, the digital suffering term could dominate the biological term by many orders of magnitude.

Value loading is the highest-leverage intervention. Everything else in this sequence – land use policy, dietary choices, research funding – is dwarfed by the question of whether the ASI includes total suffering minimization in its objective function in a way that is game-theoretically sophisticated.

Interstellar expansion could spread the problem. If the ASI seeds other star systems with biological ecosystems without welfare optimization, the suffering scales to galactic proportions.

The practical implications for someone reading this in 2026:

Support research on s-risks from AI. Not just “don’t kill humans” alignment, but “minimize total suffering” alignment. The Center on Long-Term Risk is doing some of the most relevant work here.

Advocate for invertebrate welfare considerations in AI governance. The conversation about AI values is happening now. If invertebrate and digital welfare are not part of it, they won’t be in the value function.

Don’t neglect near-term interventions. The AI transition is uncertain. If ASI is delayed or impossible to affect, the interventions from part 3 remain the best available options.

Take digital suffering seriously. The RL agents being trained today are the simplest precursors of what’s coming. If we can’t figure out whether current systems suffer, we have no hope of managing suffering at Dyson-swarm scale.